May 2020

Chris Langan’s CTMU (Cognitive-Theoretic Model of the Universe) is a metaphysical/philosophical theory of everything, in other words a “theory of theories”. It explains the deep nature of reality, its emergence, where it is headed and what its purpose is.

The theory is infamous for being difficult to understand, and this has unfairly drawn ad hominem scorn, in place of fair discourse, from the material-reductionist culture that permeates our time. But as I hope to show, for anyone with an open mind the core ideas are easy to grasp. Grappling with their implications is the harder part: it is a gateway that takes eager study of foundational concepts, and of the theory itself, to pass through.

If you want to skip my attempt at an easier explanation, the full paper is available here: http://hology.org/ (as it has been since 2002).

Without further ado, let’s get to it.

The Birth of the Universe.

Physicists, philosophers and theologians alike have all sought to explain (with varying degrees of success) what existed before the Big Bang (understood to be the birth of the universe) and what gave rise to it (what preceded it). Many philosophers would be forced to admit that it could not literally have been nothing (as in no single thing) that predated and gave rise to the universe.

Everything that begins to exist has a cause. The main source of controversy over that premise is whether the cause is internal or external, that is, whether the thing is self-caused or exo-caused (caused by something else). Something I think we can all agree on is that something must exist in order to generate a cause. This rules out a pre-Big Bang period in which no single thing existed, and makes clear that something had to be there.

That something takes on the nature of what physicists call quantum foam: a concept from quantum gravity in which spacetime has a foamy, jittery nature and consists of many small, ever-changing regions in which space and time are not definite but fluctuate.

As you can tell from the description above, quantum foam sounds very undefined, in the sense that no spacetime or any other type of structure has been defined in it. This is a reasonable description to give it: the universe and its space and time structure (our reality) had yet to be defined, so logically, before it was defined, it was undefined. Simple and undeniable.

Enter UBT:

Quantum foam actually gives way to a richer concept found in the CTMU known as Unbound Telesis (UBT), literally meaning unbounded purpose. UBT is very much like quantum foam in that it is a purely undefined realm free of informational constraint; that is, it is completely unconstrained and capable of taking on any definition, meaning, ontology or structure.

To really get a sense of this concept, picture the laws of physics suddenly being something else: energy no longer equals mass times the speed of light squared, an action no longer has an equal and opposite reaction, the electron mass could be anything. Similarly for logic, imagine sentential logic not being defined: first-order statements like x = x can’t exist, because x, and even propositions themselves, have not been defined.

In UBT, x ∧ ¬x can hold: x can be both the case and not the case at the same time (just as x could be equal to itself and not equal to itself), a contradiction. If you think this scenario through, you can come to see UBT as a sort of realm of pure paradox, where structure and being have not been defined because the rules for their existence need to be defined and bounded first.

So what defined the rules for our universe’s existence? The answer must be that the universe itself does: it self-causes them. For an external cause to generate reality it has to affect reality, and it is thereby contained within reality, since reality contains all that is real.

A cause cannot truly be separate from the effect it creates, since for a cause to generate an effect it has to exist in a relational medium that allows it to do so (a medium that relates cause and effect), and that medium must be reality. So external causes cannot be distinctly separated from reality, because they require interaction with reality in order to cause it. This implies they must be similar enough to reality to affect it; if they were completely unlike reality, they could not interact with it in order to cause it. That makes reality “self-causing” or, as it emerges from UBT, “self-defining”.

But why did it pick the laws of physics and logic as its foundational rules? The answer: it has to conform to logico-mathematical consistency in order to have a stable structure and not collapse under its own self-contradiction. A helpful primer for understanding this is here: https://youtu.be/edwYu20SMFc

If reality contradicted itself anywhere (if the laws of physics or the laws of logic varied), then it would have collapsed already, much sooner rather than later. If nature isn’t always uniform, the mechanism that controls its uniformity would be an arbitrary one (a mathematically random one) instead of a chosen one, meaning the universe would be maximally entropic and would destroy itself instantly.

Thus the problem of induction in science, whereby nature is only assumed to be uniform, is solved by the deduction that nature must be uniform; otherwise none of these sentences, and none of the thoughts that you and I are having, would exist.

Imagine you have a friend called Dan who is 6’2. In this reality you can perceive him as being 6’2 because there exist laws that make perception possible. Now picture an alternate reality or world in which you can perceive Dan as being 6’2 and 5’8 at the same time, a contradiction. Such a world cannot form from UBT because it is incoherent: it is self-contradictory, or wholly paradoxical in nature. If even one part of a system is contradictory, then the entire system is self-contradictory; it would not be able to sustain itself and would collapse. That prevents it from being a reality where observers can form, and therefore makes it impossible for it to be a reality that we could be in.

This means reality must be set up to allow observers to arise within it, implying that mind determines reality. And since those observers cannot be separated from reality (because they are in it), they are, in a literal sense, reality in the act of observing itself.

Coherency Is Good, Incoherency Is Evil.

A final word on UBT: because it is the logical negation of logic (logic’s antithesis), it always exists (is always present) as a necessary complement to logic, and reality is constantly defining itself out of it.

This can be understood in more poetic terms: darkness is the absence of light. It follows that reality is in a process of choosing what it is (which always involves choosing what it isn’t). That is, it is deciding itself by negation, by negating itself.

The problem of evil can then be resolved by understanding that evil is whatever is incoherent (UBT), since incoherency destroys meaning, and that good is whatever is coherent, whatever is whole. Reality is choosing not to be evil by resolving its own meaning with respect to constraint. This means that evil will ultimately be destroyed, but that destruction must logically be a process (a series of sequential events): the process has to take place for the destruction of evil to occur.

A good way to get an idea of this, in life-advice style, is physicist David Bohm’s discussion of wholeness: https://youtu.be/mDKB7GcHNac

Reality As a Mind.

The CTMU proves that reality is a self-aware, self-conscious construct; in other words, it is intelligent. To show this, one needs a definition of what intelligence is. A useful starting point is deep learning, a field of machine learning that uses many-layered neural networks (mathematical objects that approximate the neural networks of the brain, which are mathematical themselves).

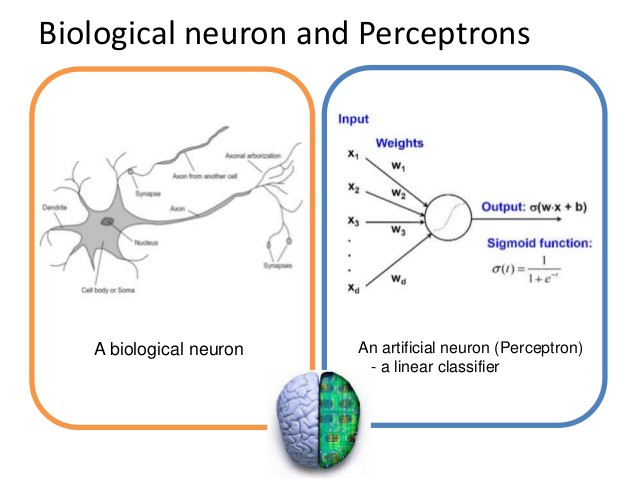

(Shown above: the structural and mathematical similarities between a silicon-based neuron and a biological one.)

Neurons are mathematical objects that take in inputs (data or information; see the input heading in the picture), combine them with weights and a bias, and output a function of that combination. During training, the weights and bias are adjusted at a pace set by a learning rate. The human brain contains roughly 86 billion biological neurons (often rounded up to 100 billion) running in parallel to compute an output classification based on the input it receives and its guess about the relevance of that input (its bias or beliefs).
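To make the artificial neuron concrete, here is a minimal Python sketch of a single neuron: a weighted sum of the inputs plus a bias, passed through an activation function, with one training step whose size is set by a learning rate. The sigmoid activation, the squared-error training rule and all function names are my own assumptions for illustration; the picture above does not commit to any of them.

```python
import math


def sigmoid(z: float) -> float:
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron_output(inputs, weights, bias):
    """Weighted sum of the inputs plus a bias, passed through a sigmoid.

    The sigmoid is an assumed activation function chosen for illustration.
    """
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(z)


def train_step(inputs, weights, bias, target, learning_rate):
    """One gradient-descent step on the squared error 0.5 * (output - target)**2.

    The learning rate only scales how far the weights and bias move;
    it plays no role in computing the neuron's output.
    """
    out = neuron_output(inputs, weights, bias)
    # Derivative of the squared error with respect to the weighted sum z.
    delta = (out - target) * out * (1.0 - out)
    new_weights = [w - learning_rate * delta * x for w, x in zip(weights, inputs)]
    new_bias = bias - learning_rate * delta
    return new_weights, new_bias


if __name__ == "__main__":
    inputs, weights, bias = [1.0, 2.0], [0.0, 0.0], 0.0
    print("before training:", neuron_output(inputs, weights, bias))
    weights, bias = train_step(inputs, weights, bias, target=1.0, learning_rate=0.1)
    print("after one step: ", neuron_output(inputs, weights, bias))
```

After one step toward a target of 1.0 the output moves slightly above its starting value, which is all "learning" means at this scale.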

From this explanation we can understand intelligence to be an information-processing and information-generating system: it takes in information (information in the sense of certain possibilities selected to the exclusion of other possibilities that could have been selected), applies a mathematical procedure to it, and transforms it into an output state, generating information.

If the brain is processing information, then all of its subcomponents must be as well (although at different levels of complexity).

It follows that if this type of generalized information-processing capability can be shown to exist in the foundational building blocks of reality (component particles), then reality can be shown to be everywhere, at all times, processing and generating information.
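One standard way to put a number on "possibilities selected to the exclusion of others" is Shannon's measure: picking one option out of N equally likely options yields log2(N) bits. The short Python sketch below assumes equal likelihood, and the function name is hypothetical; neither comes from the CTMU paper.

```python
import math


def selection_information_bits(num_possibilities: int) -> float:
    """Bits of information gained by selecting one of N equally likely options.

    Assumes every possibility is equally likely (an illustrative simplification).
    """
    if num_possibilities < 1:
        raise ValueError("there must be at least one possibility")
    return math.log2(num_possibilities)


if __name__ == "__main__":
    for n in (1, 2, 8):
        print(n, "possibilities ->", selection_information_bits(n), "bits")
```

Selecting from a single possibility excludes nothing and so generates no information, while a choice between two possibilities generates exactly one bit.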

Let’s see if we can show that an atom is processing information:

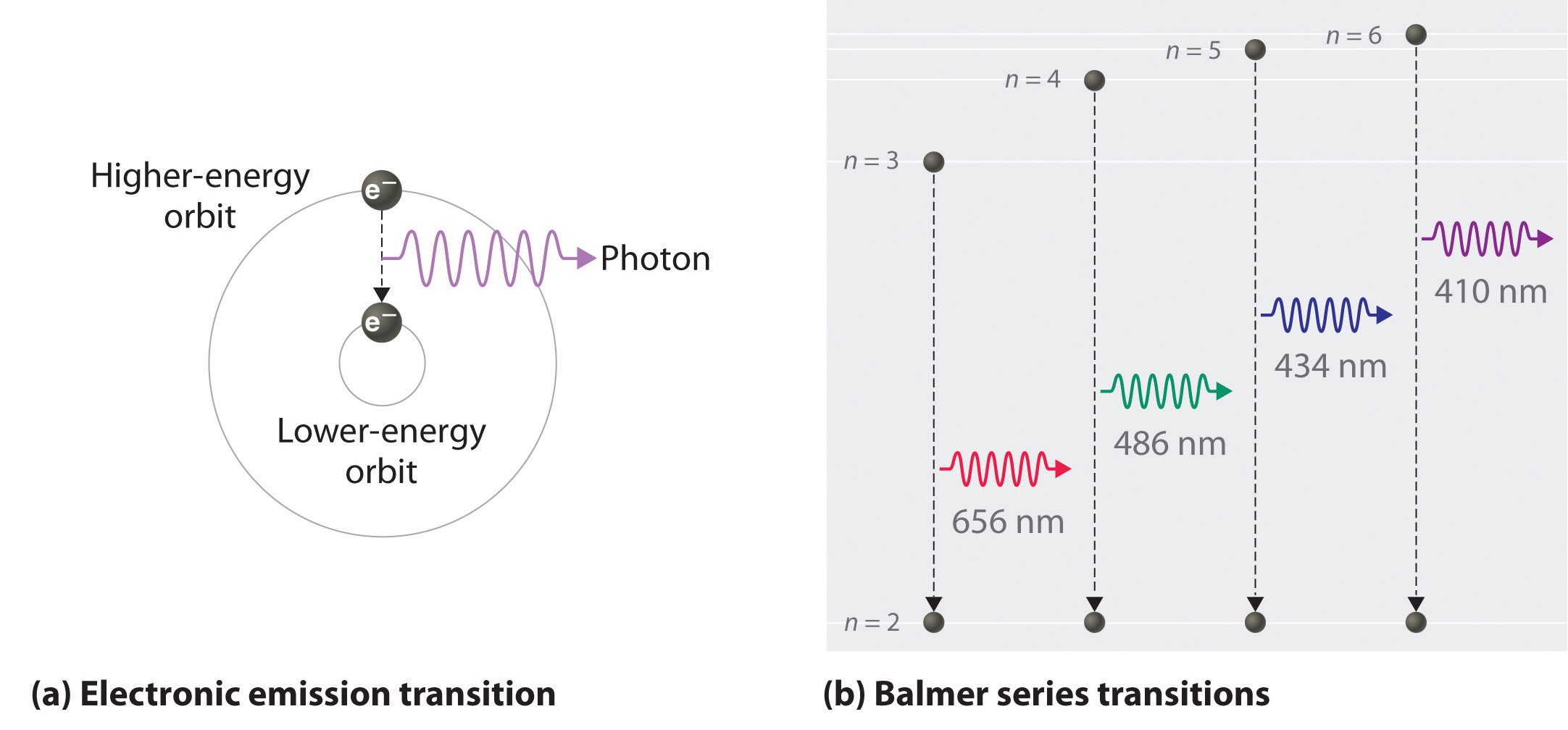

When an electron in an atom absorbs electromagnetic radiation (informational input) whose energy matches the gap between two energy levels, it jumps to a higher-energy orbit. When it later drops back to a lower level, it outputs (information generation or state transformation) a photon whose wavelength is set by the energy difference between the two levels, which in turn fixes the maximal or minimal energy it can output (bias).

In this case the bias would be the energy threshold of the atomic system: if the input exceeds the ionization energy, the electron is stripped from the atom rather than moved to another orbit. This is an example of an invariant limit to what can be actualized in reality, with some hefty room for variance in between.
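The input, output and threshold behavior described above can be sketched for the simplest atom. The Python below assumes the textbook Bohr model of hydrogen, with level energies of about -13.6 eV / n² and hc ≈ 1239.84 eV·nm. Hydrogen, the constants, the matching tolerance and the function names are all my own illustrative choices, and real spectral lines have widths and selection rules that this ignores.

```python
RYDBERG_ENERGY_EV = 13.6   # approximate hydrogen ionization energy from n=1, in eV
HC_EV_NM = 1239.84         # approximate Planck constant times speed of light, in eV*nm


def level_energy_ev(n: int) -> float:
    """Energy of hydrogen level n in the Bohr model (negative means bound)."""
    if n < 1:
        raise ValueError("n must be a positive integer")
    return -RYDBERG_ENERGY_EV / (n * n)


def emitted_wavelength_nm(n_upper: int, n_lower: int) -> float:
    """Wavelength of the photon emitted when the electron drops n_upper -> n_lower."""
    if n_upper <= n_lower:
        raise ValueError("n_upper must be greater than n_lower")
    gap_ev = level_energy_ev(n_upper) - level_energy_ev(n_lower)
    return HC_EV_NM / gap_ev


def classify_input(photon_energy_ev: float, n_start: int = 1,
                   max_level: int = 50, tolerance_ev: float = 1e-3) -> str:
    """Say what an incoming photon does to an electron sitting in level n_start."""
    # At or above the binding energy the electron leaves the atom entirely.
    if photon_energy_ev >= -level_energy_ev(n_start):
        return "ionized"
    # Otherwise the photon is absorbed only if it matches a gap between levels.
    for n in range(n_start + 1, max_level + 1):
        gap_ev = level_energy_ev(n) - level_energy_ev(n_start)
        if abs(gap_ev - photon_energy_ev) <= tolerance_ev:
            return f"excited to n={n}"
    return "not absorbed"


if __name__ == "__main__":
    print(classify_input(10.2))          # matches the n=1 -> n=2 gap
    print(classify_input(5.0))           # matches no gap
    print(classify_input(14.0))          # exceeds the ionization energy
    print(emitted_wavelength_nm(3, 2))   # red Balmer line, in nm
```

The point of the sketch is only that the atom's response is a fixed function of its input: some inputs change its state, some pass through, and past a hard limit the system itself comes apart.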

The bias in the human brain’s neural operators can obviously also vary (like true or false beliefs), but not past the bounds set by matter’s invariant limits. This is a form of constraint that allows freedom to exist. Constraint is necessary for freedom, because to constrain something is to define it, and to define it is to give it rules (the laws of physics). If reality were undefined we wouldn’t exist, and it would collapse back into the primordial realm of UBT.

If atoms weren’t doing anything like information processing, then brains could not be doing information processing either. The parts make up the whole.

This shows that what atoms are doing is ultimately information processing as well, which assigns them a real, yet very basic, form of intelligence and self-awareness. The radiation received by the electron of one atom cannot come from an atom that exists outside of reality (since reality contains all that is real), so reality is everywhere, at all times, perceiving itself in an act of contemplation or self-modeling (which is what minds do).

Since information has to exist in order to be processed, information must be processing itself, at variant (human) and invariant (physics) levels.

This makes reality a stratified, intelligent information-processing continuum, with the ability to perceive itself distributed everywhere at different levels of capability. To be is to be perceived; to be is to be in communion (communication) with self: being as communion.

Reality is everywhere self similar and self processing, a symmetry.

It exists as a meta-cybernetic system: “meta” meaning of a higher or second-order kind, and “cybernetic” relating to communication and automatic control systems in both machines and living things.

Furthermore, since what these processes are doing is ultimately mathematical, and mathematics is a language (and language is the only possible way to commune), reality is a Self-Configuring Self-Processing Language (SCSPL).

Unavoidable Self Reference In Logic.

SCSPL can be reduced to its base mathematical-logical parts: tautologies. All language relies on tautologies in order to exist, because a tautology is a statement that is true by necessity, by virtue of its logical form.

A ∨ ¬A

1 or 0.

In order to generate information one must select from a tautological matrix, so selecting A out of “A or not A” is a mathematical procedure that generates information. But where do the tautologies that allow information generation come from? They come from themselves. “A or not A” can only be reduced to a tautology, meaning tautologies everywhere contain themselves.

As an example:

(A ∨ ¬A) ⇒ ¬(A ∧ ¬A)
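Both expressions above can be checked mechanically with a truth table. Here is a minimal Python sketch that tries every truth value of A; the helper names (`is_tautology`, `implies` and the three formula functions) are my own and not part of the CTMU or of any library.

```python
from itertools import product


def is_tautology(formula, num_vars: int) -> bool:
    """Return True if the formula is true under every assignment of truth values."""
    return all(formula(*values) for values in product([False, True], repeat=num_vars))


def implies(p: bool, q: bool) -> bool:
    """Material implication: p => q is false only when p is true and q is false."""
    return (not p) or q


def excluded_middle(a: bool) -> bool:
    """A or not A."""
    return a or (not a)


def example_implication(a: bool) -> bool:
    """(A or not A) => not (A and not A)."""
    return implies(a or (not a), not (a and (not a)))


def contradiction(a: bool) -> bool:
    """A and not A, included as a formula that should fail the check."""
    return a and (not a)


if __name__ == "__main__":
    print("A or not A:", is_tautology(excluded_middle, 1))
    print("(A or not A) => not (A and not A):", is_tautology(example_implication, 1))
    print("A and not A:", is_tautology(contradiction, 1))
```

The first two formulas hold for both values of A, while "A and not A" holds for neither, which is the sense in which a tautology is true by its form alone.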

For any logical expression to occur, the base prime movers of linguistic expression must necessarily contain themselves, or they will be prevented from allowing any type of coherent expression.

Since they contain themselves, they are always expressing themselves.

This self-inclusive logic validates the proposition “reality contains all that is real”: if the set of all sets could not contain itself, then even partial containment would be impossible, and logic would be impossible to use for perception.

A=A.

— — — — — — — — — — — — — — — — — — — — — — — — — — — — — —

This concludes part 1 of explaining the CTMU. Up next will be Russell’s paradox and the supposed problems of self-inclusion, as well as undeniable proof of your immortality.

This article was originally published at: https://medium.com/@variantofone/explaining-the-ctmu-cognitive-theoretic-model-of-the-universe-163a89fc5841

WTF man, why?

The smartest people in the world should be focused on saving the environment and humanity… Not this BS.

This is fascinating. I had been religious for most of my life (Christian), and in the past several years left the Church for various reasons. However, I couldn’t just turn my back on the concept of God, because outright denying the existence of God has never made sense to me: stuff exists. They will say God doesn’t exist because of the Big Bang. But what doesn’t sit well with me is that no matter how small we go, down to subatomic particles, all of it comes down to there being material stuff where there was no material stuff. Just smaller and smaller stuff, but stuff nevertheless; why is there stuff and not no-stuff? So interesting. I love, love this “stuff.” Haha.

I guess my question then would be, why or how, at what point, did the UBT start to choose to organize itself into the rules of reality as we know them? Why at that point and not another?

This is indeed fascinating! I love this “stuff” too haha. I’m not sure as to why now. What is “now” anyway? I was a Christian once as well. Extremely committed. Studied world religion. Went on mission teams. Converted and baptized people through intensive, in-depth Bible studies. I will never stop thinking about our existence, why we are here, what we are, who we are etc. But to your question why now, I guess we birthed (not from nothing) ourselves into existence by our own sheer will. Possibly the collective will of the conscious universe to experience itself. I think an equally logical question to ask would be, why not? It’s also logical to believe that there is no beginning or end to existence but rather it’s all circular. Round and round and round we go. Ever evolving and creating and understanding and experiencing. Honestly not sure, the days (religious days) of claiming to know everything are behind me. But that’s the best I’ve got so far in my short existence. Peace.